前言

之前的博客中我介绍了Kalman滤波器,这个算法被广泛用于多目标跟踪任务中的行人运动模型。然而实际场景中存在有很多相机运动,仅仅依赖行人运动模型是不够的。这次我主要介绍下相机运动模型,以对极几何和ECC为主。完整的代码和示例我都放在了github。

1 多目标跟踪中的相机运动

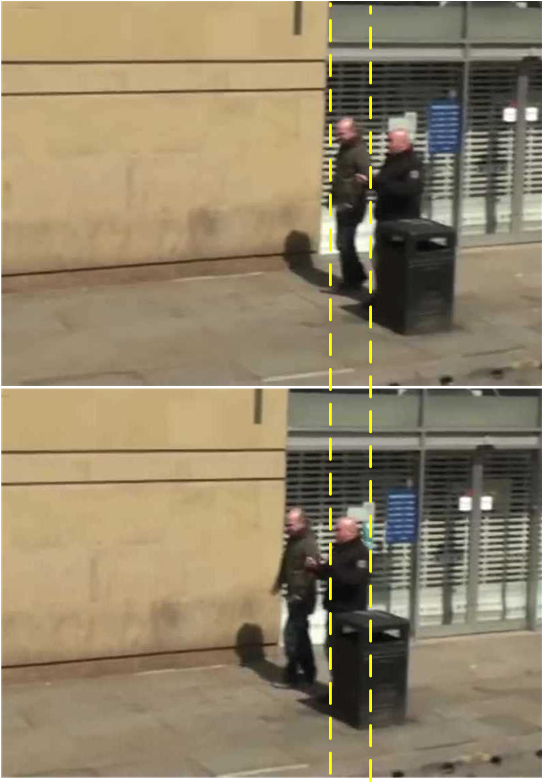

在多目标跟踪场景中往往存在有复杂的运动模式,这些模式除了行人这类非刚性运动,还有相机这类刚性运动。以MOT Challenge数据集为例,其中就存在大量相机运动场景,甚至超过了静态相机场景数。比如MOT17-13号视频中车载相机在车辆转弯时对于两个运动速度较慢行人的视角:

我们从示意图可以看到,由于车辆转弯速度很快,上一帧的行人位置映射到下一帧就变成了另一个的位置。因此相机运动对于多目标跟踪的影响很大,尤其是仅依赖运动信息的模型,相机的运动会严重干扰运动模型。

2对极几何

2.1 对极几何模型

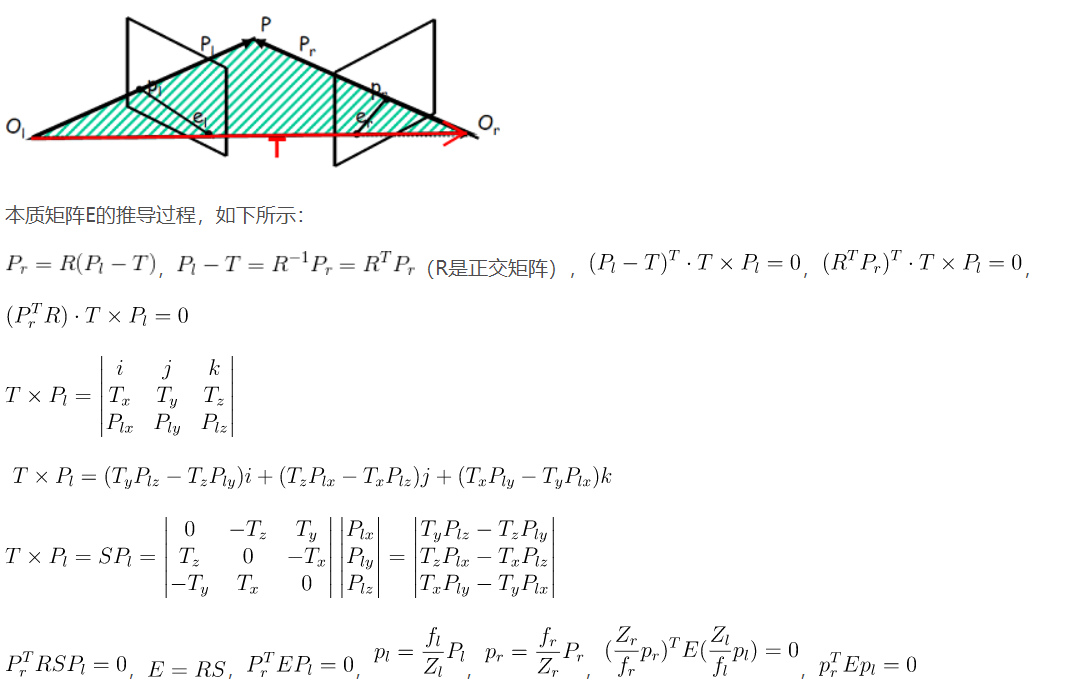

关于相机运动方面的知识,我在之前介绍单目深度估计中的无监督模型时介绍过,即将变化差异不剧烈的两帧画面近似看作不同相机视角下同一场景的画面,也就是对极几何,这一点可以看看《计算机视觉中的多视几何》中关于相机几何方面的知识:

不过这里我需要先解释一下一些概念,以方便后续模型的讲解:

- 基线[baseline]:直线CC’为基线。

- 对极平面束[epipolar pencil]:以基线为轴的平面束。

- 对极平面[epipolar plane]:任何包含基线的平面都称为对极平面。

- 对极点[epipole]:摄像机的基线与每幅图像的交点。比如,上图中的点x和x’。

- 对极线[epipolar line]:对极平面与图像的交线。

- 5点共面:点x,x’,摄像机中心C、C’,空间点X是5点共面的。

- 极线约束:两极线上点的对应关系。

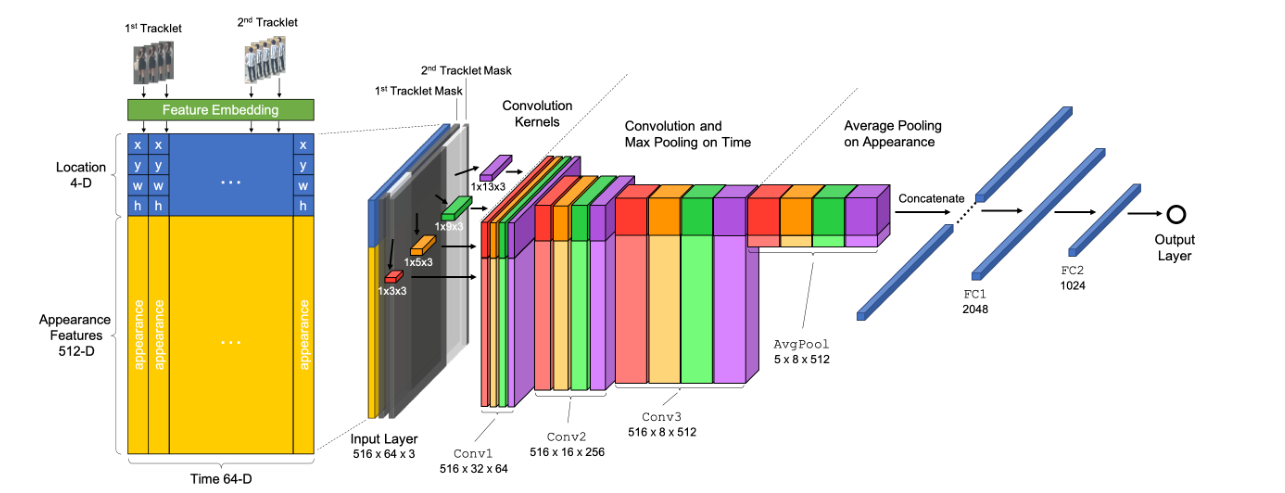

接下来,我们首先看一篇ACM MM2019的论文TNT[1],这是一篇研究端到端运动信息和表观信息结合框架的论文:

不过这里我们要讲的是其提出来的相机运动模型:

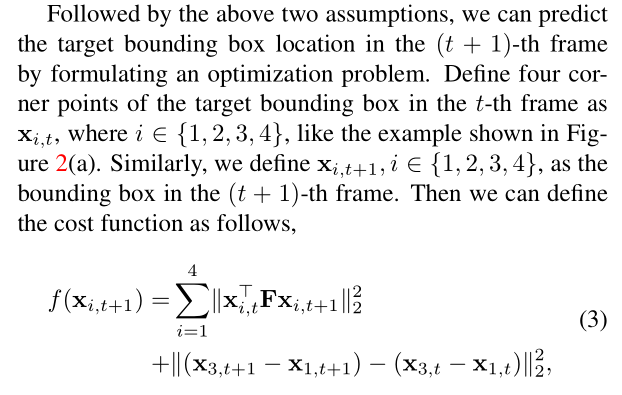

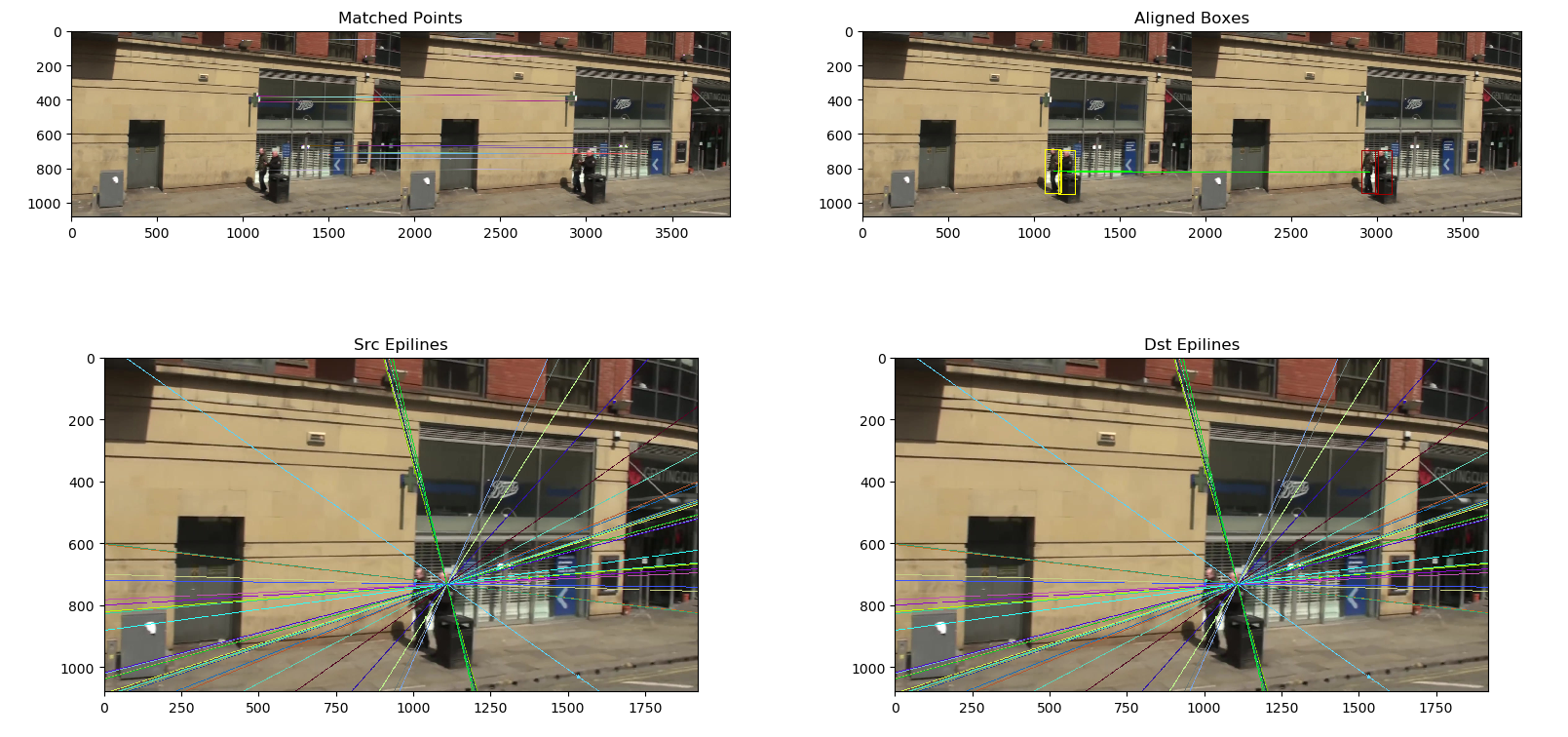

我们可以看到,作者将行人运动和相机运动结合了,其中目标函数的第一部分是利用了对极几何中本质矩阵F的性质,相关的理论推导可以看下图:

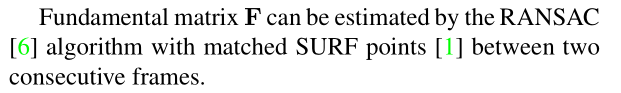

其中x表示的目标框的四个顶点的坐标信息,第二部分中作者则是假设两帧中的同一目标的形状近似不变。因此我们只需要求得本质矩阵F,即可根据上一帧目标框信息,利用最小二乘法求得下一帧目标框信息。关于本质矩阵F的求解,作者提到基于SURF特征点提取和Ransac采样进行估计。

不过作者也没有给出详细的实现过程,我这里试着做一下理论推导。首先由于作者在目标函数中要求了目标框形状的一致性,那么我们不妨直接把下一帧目标框的形状信息看做已知的。其次,我们先假设本质矩阵F已经被估计出来了,这个矩阵是3x3的形状,那么为了推导方便,我这里做一个假设:

对于第t帧的任意一个目标框的每一个节点$x$,这里由于是三维的几何信息,所以添加一个z轴坐标,令$x^TF$为一个已知的三维向量,那么一个目标框就存在四个这样的三维向量,不妨看作一个4x3的矩阵M

那么就可以将目标函数展开,这里面的(w,h)为已知信息,(x,y)为下一帧目标框的左上角坐标:

$$ \begin{array}{l} \left\{ \begin{array}{l} {M_{11}}x + {M_{12}}y + {M_{13}} = 0\\ {M_{21}}\left( {x + w} \right) + {M_{22}}y + {M_{23}} = 0\\ {M_{31}}x + {M_{32}}\left( {y + h} \right) + {M_{33}} = 0\\ {M_{41}}\left( {x + w} \right) + {M_{42}}\left( {y + h} \right) + {M_{43}} = 0 \end{array} \right.\\ \Rightarrow \left[ {\begin{array}{*{20}{c}} {{M_{11}}}&{{M_{12}}}\\ {{M_{21}}}&{{M_{22}}}\\ {{M_{31}}}&{{M_{32}}}\\ {{M_{41}}}&{{M_{42}}} \end{array}} \right]\left[ {\begin{array}{*{20}{c}} x\\ y \end{array}} \right] = \left[ {\begin{array}{*{20}{c}} {{M_{13}}}\\ {{M_{23}}{\rm{ + }}w{M_{21}}}\\ {{M_{33}}{\rm{ + }}h{M_{32}}}\\ {{M_{43}}{\rm{ + }}w{M_{41}} + h{M_{42}}} \end{array}} \right] \end{array} $$

很明显这就是一个典型的Ax=b问题,后面的问题就迎刃而解了。

2.2 实验分析

为了保证效率,我这里采用ORB特征提取策略,然后采用brute force的匹配策略:

1 | class Epipolar(object): |

那么对于本质矩阵的估计和最小二乘法的应用,都可以直接利用已有的工具箱opencv和numpy搞定:

1 | def GetFundamentalMat(self, keypoints1, descriptors1, keypoints2, descriptors2): |

具体效果如下:

上面极线的法线也正好是车载相机的方向所在,可以看到第一章的示例问题被很大缓解了:

3ECC

3.1原理介绍

第二章所介绍的对极几何方法,由于我们只是根据二维信息对三维信息的估计,所以也会存在误差。这一张我们也讲一个简单有效的方案,那就是“仿射变换”。当然,并不是我们所理解的那种仿射变换,具体细节我将慢慢介绍。

第一次看到ECC算法,我是在ICCV2019的Tracktor++[3]中,不过作者只是一笔带过,没有提及如何实现。ECC算法全名是增强相关系数算法[2],来自于PAMI2008的一篇论文,这个算法适用于图像配准任务的:

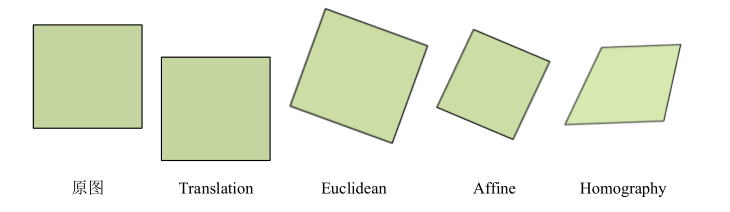

也就是对于两张内容差异小,但是存在光照、尺度、颜色、平移等变换影响的图像,将二者对齐。ECC算法本质是一个目标函数:

$$ ECC = {\left\| {\frac{{{x_i}}}{{\left\| {{x_i}} \right\|}} - \frac{{{y_i}}}{{\left\| {{y_i}} \right\|}}} \right\|^2},y = warp(x) $$

当然这只是一个原始形式,在求解过程中有所调整,我就不细讲这里的理论了。可以注意到的是y=warp(x)这个函数,所以这个算法假设两帧图像之间存在某种变换,不一定是仿射变换,可能有以下几种:

其中最后一种透视变换的矩阵形式是:

$$ \left[ {\begin{array}{*{20}{c}} {x'}\\ {y'}\\ {z'} \end{array}} \right] = \left[ {\begin{array}{*{20}{c}} {{a_{11}}}&{{a_{12}}}&{{t_x}}\\ {{a_{21}}}&{{a_{22}}}&{{t_y}}\\ {{a_{23}}}&{{a_{24}}}&{{t_z}} \end{array}} \right]\left[ {\begin{array}{*{20}{c}} x\\ y\\ z \end{array}} \right] $$

前三种变换则不考虑最后一行信息,即2x3的矩阵形式。

3.2实验分析

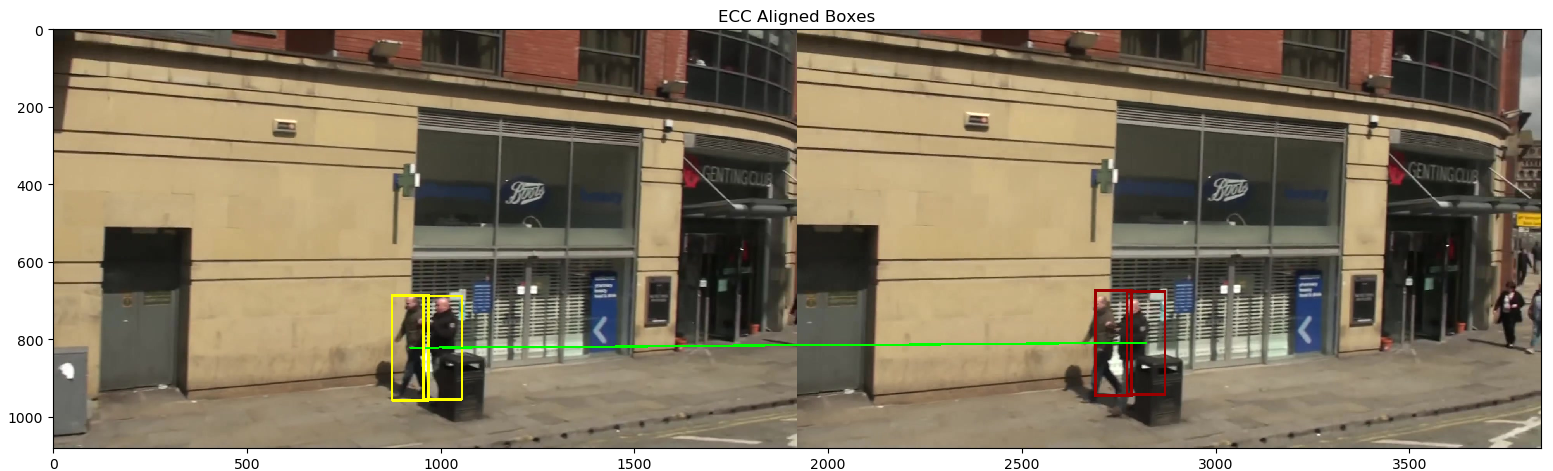

opencv中正好提供了ECC相关的功能函数,这里我们只需要再次封装,以方便多目标跟踪。可以知道的是ECC算法的核心在于变换矩阵的求解:

1 | def ECC(src, dst, warp_mode = cv2.MOTION_EUCLIDEAN, eps = 1e-5, |

这里面我添加了一个技巧,由于ECC算法针对的是两幅图,所以图像的尺寸对于算法求解速度的影响很大。因此这里我根据变换矩阵的形式,设计了一种可以根据尺度放缩自动调节的简易算法。效果如下:

效果也很好,值得一提的是,ECC算法只需要大约几毫秒的时间,但是由于它的求解效率跟变换的难度相关,所以间隔越久越慢,而对极几何的方法效率比较稳定,不过就很慢了。

4 其他近似方案

4.1光流

上面我介绍的都是近两年关于相机运动的针对性解决方案,那么实际上在有一些算法模型中,如果场景变化不剧烈,并不特别需要用到运动模型。比如基于光流法的多目标跟踪算法,这里众所周知的就是ICCV2015的NOMT[5]算法。

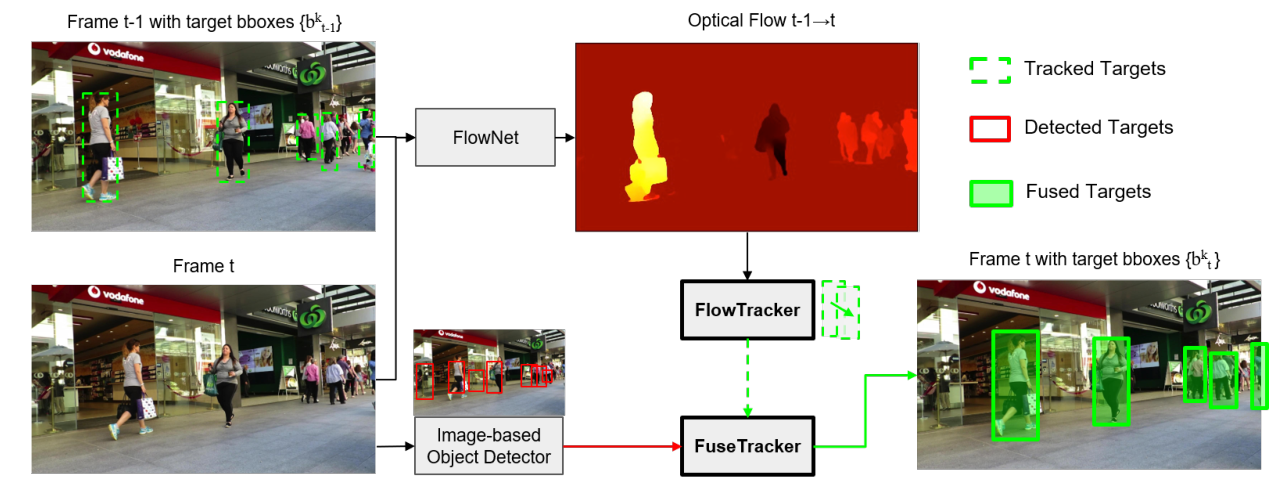

作者用的是一种简化版的快速光流法,那么更形象的可以看今年刚出的一篇论文《Multiple Object Tracking by Flowing and Fusing》,具体我就不说了,就是简单的在Tracktor++框架上加了一个光流预测分支:

可以看到的是,光流也是在捕捉相邻帧中相似的像素信息,这一点跟第二章中提出的两种相机运动模型有点类似,所以不需要显式使用相机运动模型。

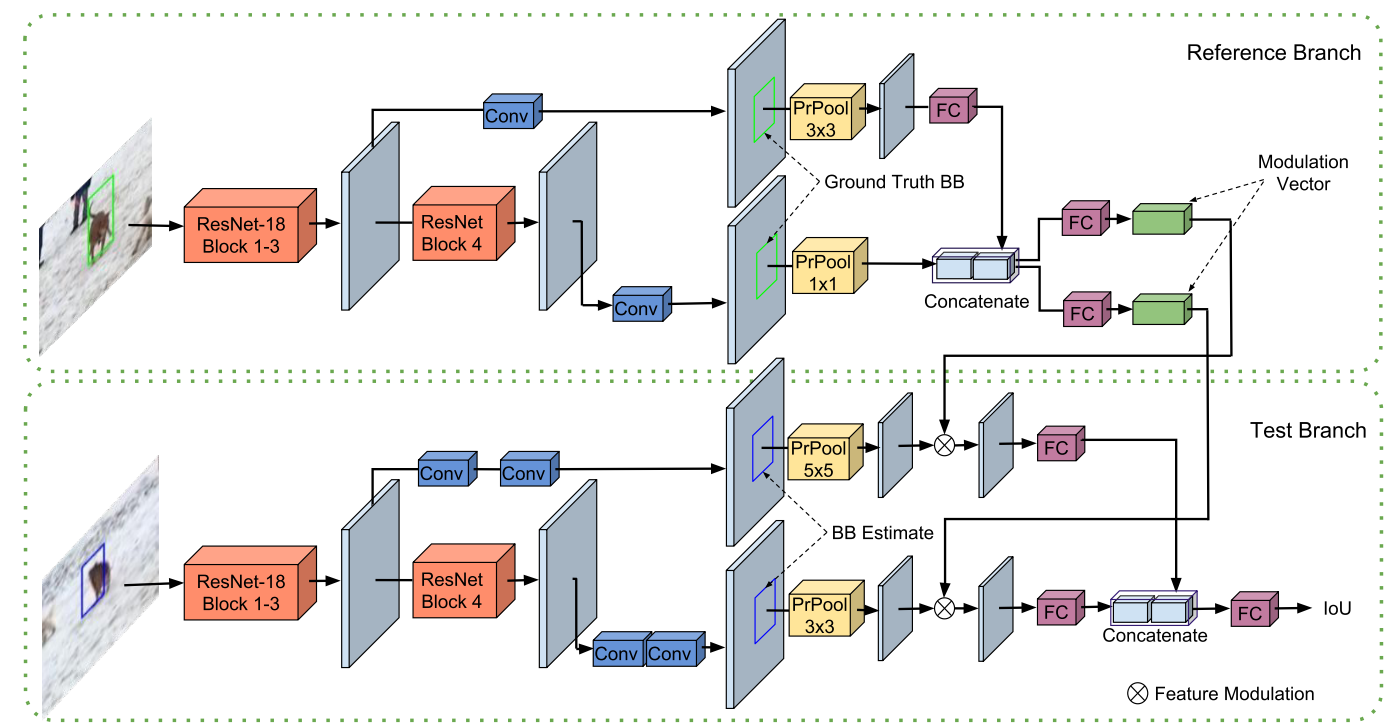

4.2SOT

而基于SOT的方法,无论是使用传统的相关滤波算法还是使用Siamese类深度学习框架,都会在上一帧目标周围1.5~2.5倍区域搜索下一帧的目标,这里面会显式或者隐式用到特征的比对。只不过不同于上面的像素比对,这里是更加高层的特征比对。

参考资料

[1] Wang G, Wang Y, Zhang H, et al. Exploit the connectivity: Multi-object tracking with trackletnet[C]. in: Proceedings of the 27th ACM International Conference on Multimedia. 2019. 482-490.

[2] Evangelidis G D, Psarakis E Z. Parametric image alignment using enhanced correlation coefficient maximization[J]. IEEE transactions on pattern analysis and machine intelligence, 2008, 30(10): 1858-1865.

[3] Bergmann P, Meinhardt T, Leal-Taixe L. Tracking without bells and whistles[C]. in: Proceedings of the IEEE International Conference on Computer Vision. 2019. 941-951.

[4] Choi W. Near-online multi-target tracking with aggregated local flow descriptor[C]. in: Proceedings of the IEEE international conference on computer vision. 2015. 3029-3037.

[5] Feng W, Hu Z, Wu W, et al. Multi-object tracking with multiple cues and switcher-aware classification[J]. arXiv preprint arXiv:1901.06129, 2019.